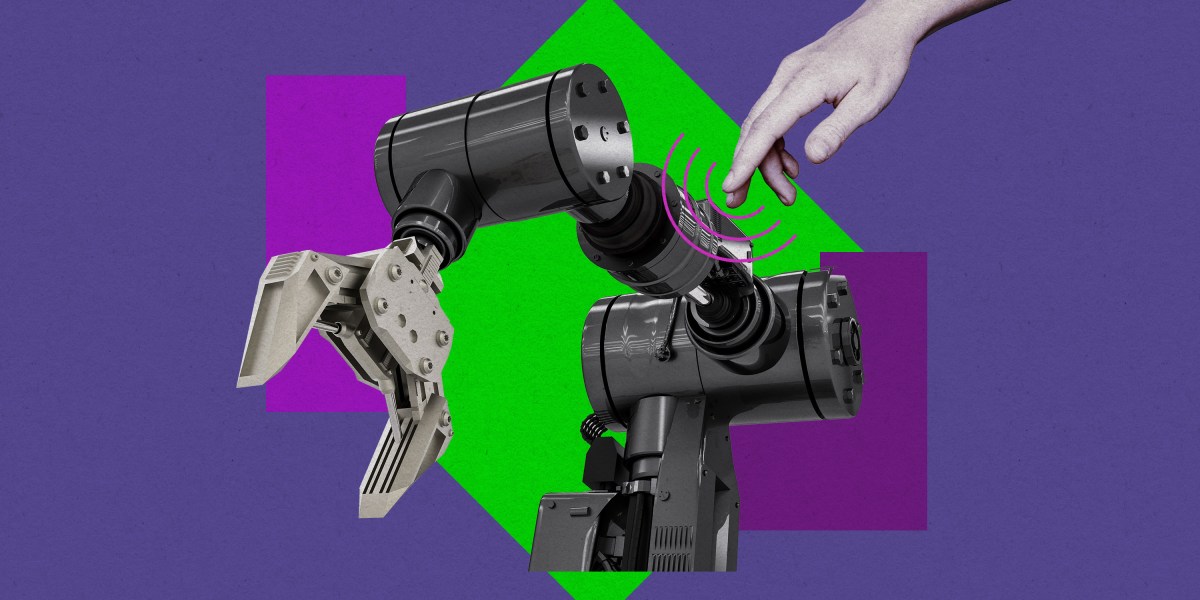

But if you press harder, you may notice a second way of sensing the touch: through your knuckles and other joints. That sensation (a feeling of torque, to use the robotics jargon) is exactly what the researchers have re-created in their new system.

Their robotic arm contains six sensors, each of which can register even incredibly small amounts of pressure against any section of the device. After precisely measuring the amount and angle of that force, a series of algorithms can then map where a person is touching the robot and analyze what exactly they’re trying to communicate. For example, a person could draw letters or numbers anywhere on the robotic arm’s surface with a finger, and the robot could interpret directions from those movements. Any part of the robot could also be used as a virtual button.
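To make the idea concrete, here is a minimal sketch of how a force and torque reading can be turned into a touch location. It is not the researchers' algorithm. It is a textbook simplification that assumes a single sensor reporting a 3D force and torque at its own origin, a point contact that pushes without twisting, and a robot link shaped like a cylinder around the sensor's z axis. The function names and the `radius` parameter are invented for illustration.

```python
import numpy as np

def line_of_action(force, torque):
    """Return (point, direction) of the line the contact must lie on.

    Assumes a pure point contact, so torque = r x force for contact point r.
    """
    f = np.asarray(force, dtype=float)
    t = np.asarray(torque, dtype=float)
    f2 = f @ f
    if f2 < 1e-12:
        raise ValueError("force too small to localize the contact")
    r0 = np.cross(f, t) / f2          # point on the line closest to the sensor
    return r0, f / np.sqrt(f2)        # unit direction along the applied force

def contact_on_cylinder(force, torque, radius):
    """Intersect the line of action with a cylinder around the z axis.

    Returns the point where the force pushes into the surface, or None
    if the line misses the (hypothetical) cylindrical link.
    """
    r0, d = line_of_action(force, torque)
    # Solve |(r0 + s*d)_xy|^2 = radius^2 for s.
    a = d[0] ** 2 + d[1] ** 2
    b = 2.0 * (r0[0] * d[0] + r0[1] * d[1])
    c = r0[0] ** 2 + r0[1] ** 2 - radius ** 2
    disc = b * b - 4.0 * a * c
    if a < 1e-12 or disc < 0.0:
        return None
    # The smaller root is where the line enters the cylinder, which is
    # where a pushing force meets the surface.
    s = (-b - np.sqrt(disc)) / (2.0 * a)
    return r0 + s * d
```

Because torque alone only pins the touch to a line, the known shape of the robot is what narrows it down to a single point. Tracking that point over time is one plausible way a drawn letter or a press on a "virtual button" region could then be recognized.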

It means that every square inch of the robot essentially becomes a touch screen, except without the cost, fragility, and wiring of one, says Maged Iskandar, a researcher at the German Aerospace Center and lead author of the study.

“Human-robot interaction, where a human can closely interact with and command a robot, is still not optimal, because the human needs an input device,” Iskandar says. “If you can use the robot itself as a device, the interactions will be more fluid.”

A system like this could offer a cheaper and simpler way to give robots a sense of touch, and it could also give people a new way to communicate with them. That could be particularly significant for larger robots, like humanoids, which continue to receive billions in venture capital investment.